    pip install open-agent-spec

Then run oa --version

Open Agent Spec

AI agents as code: define once in YAML, run anywhere.

AI agents are fragmented across frameworks and runtimes. Open Agent Spec defines them once, declaratively, and runs them anywhere via the oa CLI.

Tool use, spec composition, and a test harness, all from YAML.

Native tools, MCP servers, or custom Python, declared in the spec.

    tools:
      reader:
        type: native
        native: file.read
    tasks:
      summarise:
        tools: [reader]
    prompts:
      system: "Summarise the file."
      user: "Read {path} and summarise."

Delegate tasks to shared specialist specs. Reuse without duplication.

    tasks:
      summarise:
        description: Delegated summariser
        spec: ./shared/summariser.yaml
        task: summarise
      sentiment:
        description: Delegated sentiment
        spec: ./shared/sentiment.yaml
        task: analyse_sentiment

Data dependencies with depends_on. Eval cases with oa test.

    tasks:
      extract:
        description: Pull key facts
        output: { facts: string }
      summarise:
        depends_on: [extract]
        description: Summarise facts
        output: { summary: string }
    # oa test --spec agent.yaml

oa CLI, available for Python and Node.js.

Features

Declare data dependencies with depends_on. Output from one task flows automatically into the next. Linear, declarative, and strictly not an orchestration engine.
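The chaining idea can be sketched in a few lines of Python. This is only an illustration of depends_on ordering, not the oa runtime: the run_chain helper and the stand-in task functions are hypothetical, and the task names mirror the extract/summarise spec shown earlier.

```python
from graphlib import TopologicalSorter

# Task graph mirroring the spec: summarise depends on extract.
# Each key maps a task name to the tasks it depends on.
DEPENDS_ON = {"extract": [], "summarise": ["extract"]}


def run_chain(depends_on, handlers, initial_input):
    """Run tasks in dependency order, merging upstream outputs into each task's input."""
    results = {}
    for name in TopologicalSorter(depends_on).static_order():
        task_input = dict(initial_input)
        for upstream in depends_on[name]:
            task_input.update(results[upstream])  # upstream output flows forward
        results[name] = handlers[name](task_input)
    return results


# Hypothetical stand-ins for the LLM-backed tasks.
handlers = {
    "extract": lambda data: {"facts": data["text"].upper()},
    "summarise": lambda data: {"summary": f"Summary of: {data['facts']}"},
}

results = run_chain(DEPENDS_ON, handlers, {"text": "agents as code"})
print(results["summarise"]["summary"])
```

The sketch stays linear on purpose: each task sees the initial input plus the outputs of the tasks it names, and nothing else.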

Declare tools in the spec. Built-in tools (file.read, http.get, …), any MCP server via JSON-RPC, or your own Python class. No SDK required.

A task can delegate its implementation to another spec with spec: + task:. Build coordinator specs that reuse shared specialists with zero duplication and full traceability.

Run eval cases against any spec with oa test. Assert on task output fields. Make your agents verifiable before you ship them.

All LLM calls go through a thin HTTP interface. Swap between OpenAI, Anthropic, Grok, xAI, Codex, local, or custom engines with one line. No OpenAI SDK. No Anthropic SDK.

Attach output contracts to tasks with the behavioural-contracts library. Validate required fields, confidence scores, and custom rules after parsing and before returning. Degrades gracefully when not installed.
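To make the idea of an output contract concrete, here is a plain-Python stand-in. It does not use the behavioural-contracts API; the validate_output function, its parameters, and the confidence field name are assumptions chosen for illustration.

```python
def validate_output(output, required_fields, min_confidence=None):
    """Return a list of contract violations; an empty list means the output passes."""
    violations = []
    for field in required_fields:
        if field not in output:
            violations.append(f"missing required field: {field}")
    if min_confidence is not None:
        confidence = output.get("confidence")
        # A missing or non-numeric confidence counts as a violation.
        if not isinstance(confidence, (int, float)) or confidence < min_confidence:
            violations.append(f"confidence below {min_confidence}")
    return violations


# Hypothetical parsed task outputs.
passing = validate_output({"summary": "Short text.", "confidence": 0.9}, ["summary"], 0.7)
failing = validate_output({"confidence": 0.2}, ["summary"], 0.7)
print(passing, failing)
```

Returning a list of violations rather than raising lets a caller decide whether to reject the output, retry, or log and continue.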

Run the same YAML specs from Node.js with no Python required. @prime-vector/open-agent-spec on npm offers the same oa:// registry, the same depends_on chaining, and the same spec format.

Spec Registry

Pull shared, versioned agent specs directly from the registry using the oa:// shorthand. No copy-paste. Just delegate.

- Summarise text and extract key points: oa://prime-vector/summariser

- Label tone as positive, negative, neutral, or mixed: oa://prime-vector/sentiment

- Classify text into runtime-provided categories: oa://prime-vector/classifier

- Extract keywords and phrases ordered by relevance: oa://prime-vector/keyword-extractor

- Review code for bugs, security issues, and improvements: oa://prime-vector/code-reviewer

The Problem

Today, AI agents are often locked inside frameworks and hidden in SaaS dashboards. They're tightly coupled to specific runtimes, hard to version and review, and rarely portable across engines.

There is no standard way to define an agent declaratively. Open Agent Spec solves that.

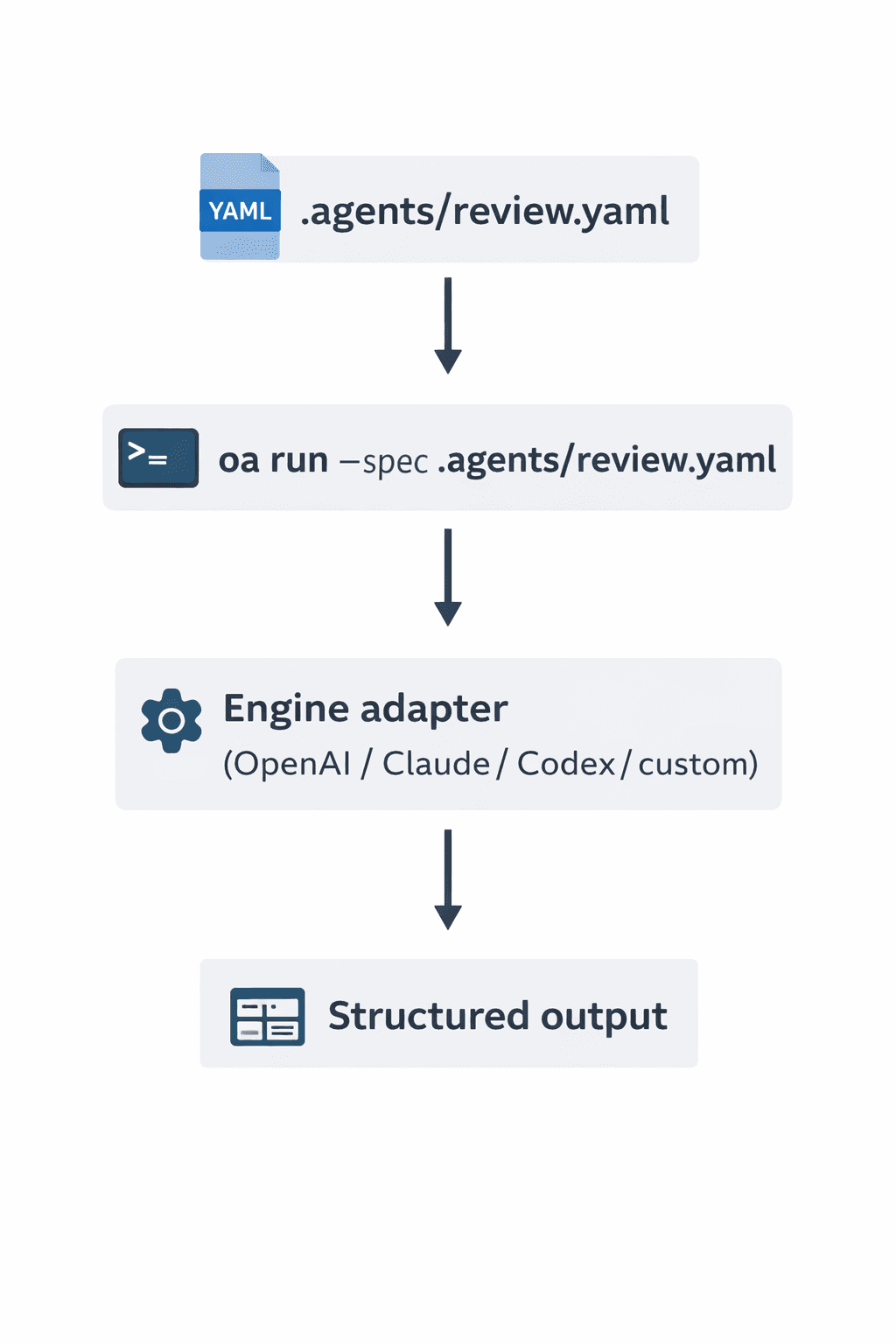

How It Works

- Define your agent in YAML using Open Agent Spec.

- Run locally with oa run --spec .agents/agent.yaml.

- Trigger from CI or GitHub Actions using the same spec.

The OA CLI handles validation, prompt rendering, and engine selection, then normalises outputs to match your declared schema.
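Two of those steps, prompt rendering and output normalisation, can be sketched as follows. This is an assumption-laden illustration rather than the CLI's actual code: render_prompt and normalise_output are hypothetical names, the {path} placeholder syntax is taken from the prompt example earlier on this page, and the type names are limited to the string type seen in the specs plus a number type added for illustration.

```python
def render_prompt(template, inputs):
    """Fill {placeholders} in a prompt template from the task input."""
    return template.format(**inputs)


def normalise_output(raw, schema):
    """Keep only the declared fields and coerce each one to its declared type."""
    casters = {"string": str, "number": float}  # assumed type names
    normalised = {}
    for field, type_name in schema.items():
        if field not in raw:
            raise KeyError(f"engine response is missing declared field: {field}")
        normalised[field] = casters[type_name](raw[field])
    return normalised


prompt = render_prompt("Read {path} and summarise.", {"path": "README.md"})
# Undeclared fields such as "extra" are dropped.
output = normalise_output({"summary": "A short summary.", "extra": 1}, {"summary": "string"})
print(prompt)
print(output)
```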

Two Main Ways to Use Open Agent Spec

Run Agents Directly

Execute specs without generating code. Ideal for CI and repo-native execution.

    oa run --spec .agents/review.yaml \
      --task review \
      --input pr.json
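For CI scripts written in Python, the same invocation can be wrapped with the standard library. This is a minimal sketch: build_oa_run_command and run_agent are hypothetical helpers, only the flags shown in the command above are used, and treating stdout as the agent's result is an assumption.

```python
import subprocess


def build_oa_run_command(spec, task, input_file):
    """Assemble the oa run invocation as an argument list (no shell quoting needed)."""
    return ["oa", "run", "--spec", spec, "--task", task, "--input", input_file]


def run_agent(spec, task, input_file):
    """Run the command and return its stdout; raises CalledProcessError on a non-zero exit."""
    command = build_oa_run_command(spec, task, input_file)
    completed = subprocess.run(command, capture_output=True, text=True, check=True)
    return completed.stdout


# Show the command that run_agent would execute, without running it.
command = build_oa_run_command(".agents/review.yaml", "review", "pr.json")
print(" ".join(command))
```

Passing check=True makes a failed run fail the CI job instead of passing silently.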

Generate Agent Code

Scaffold working agents when you want code you own and can customise.

    oa init --spec .agents/review.yaml \
      --output ./agents/review

Agents belong in your repo. The OA CLI reads .agents/*.yaml, validates them, and uses engine adapters to run your agents.

A single spec can fan out into multiple sub-agent processes. The OA runtime routes each task to the right engine via adapters.

What Open Agent Spec Is Not

- Not a framework.

- Not an orchestration engine.

- Not tied to any model provider.

It's a declarative standard: a thin, portable layer on top of any runtime.

Agent as Infrastructure

Just as Terraform defines infrastructure, Open Agent Spec defines AI agents. Specs are version-controlled, reviewable, and portable.

Specs describe agents, not the web stack.

Swap between OpenAI, Anthropic, Grok, xAI, Codex, local, or custom engines with one line.

Agents live in .agents/, versioned and reviewed like code.

One spec for local runs, GitHub Actions, and sub-agent pipelines.

Declare file, HTTP, MCP, or custom tools directly in the spec.

Tasks delegate to shared specialist specs. Reuse without duplication.

Run from Python (pip) or Node.js (npm). Same YAML, same registry, same results.